mounts =, command = "python3 /app/src/pipelines/run. replace(hour=0, minute=0), container_name = "task_download_icon_eu",

description = 'Process NWP forecasts ICON-EU',

Optional background information: start_date just defines the start of the time interval in which the dag should be executed. When Airflow’s scheduler encounters a DAG, it calls one of the two methods to know when to schedule the DAG’s next run. Airflow also provides several trigger rules that can be specified in the task, and based on the rule, the scheduler decides whether to run the task or not.

In our case, we deployed the dags and set the start_date at a point in time in the past. We then activated all of these dags, apparently at around 12:02. Airflow realized that it had been missing some runs and executed the dags at 12:02. Now Airflow calculates the next execution_date, which would be 12:02 on the next day, for all the dags (disregarding the start times of 11:00 / 12:00 / 13:00).

So there you have it.
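
The sequence of events described above can be replayed with a small toy model. This is only a sketch of the behaviour as the article describes it, not Airflow's scheduler code: the dag names, the calendar dates and the helper `next_run_after_activation` are hypothetical, and only the times of day (11:00 / 12:00 / 13:00 and 12:02) come from the text.

```python
from datetime import datetime, timedelta

SCHEDULE_INTERVAL = timedelta(days=1)  # the dags are scheduled daily

# Hypothetical dag names and dates; only the times of day come from the article.
intended_start = {
    "dag_a": datetime(2020, 1, 1, 11, 0),
    "dag_b": datetime(2020, 1, 1, 12, 0),
    "dag_c": datetime(2020, 1, 1, 13, 0),
}

# All dags were switched on at roughly the same moment.
activated_at = datetime(2020, 1, 10, 12, 2)


def next_run_after_activation(activation_time, interval):
    """Toy model: the missed run fires at activation time, and the
    next run is simply that moment plus the schedule interval."""
    return activation_time + interval


next_runs = {
    name: next_run_after_activation(activated_at, SCHEDULE_INTERVAL)
    for name in intended_start
}

for name, when in next_runs.items():
    print(name, "intended", intended_start[name].time(), "next run", when)
```

In this model the intended start times never enter the calculation, which is why all three dags end up with the same next run time.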

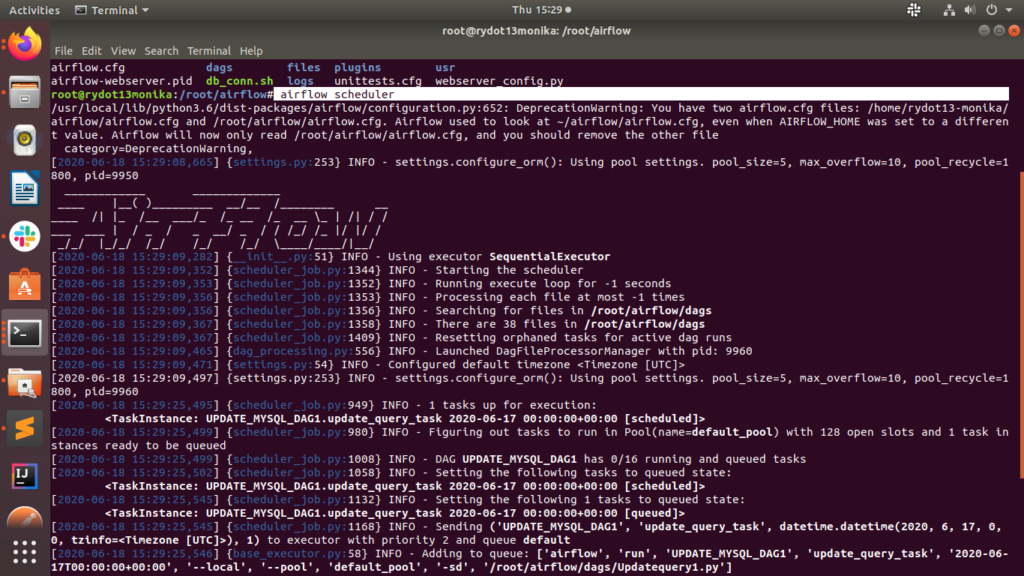

Tasks Executed per 10-Minute Interval in our Production Airflow Environment. The image shows the number of tasks completed every 10 minutes over a twelve-hour period in our single largest Airflow environment. Thanks to our randomized schedule implementation, we were able to smooth the load out significantly.

Airbnb open-sourced Airflow early on, and it became a Top-Level Apache Software Foundation project in early 2019. Airflow is a complicated system internally but straightforward to work with for users. With its initial ETL mindset, it can take some time to understand how the Airflow scheduler handles time intervals. I hope this article can demystify how the Airflow schedule interval works.

I am new to Airflow and wanted to ask how to schedule an Airflow workflow 2 times in a day e.g. … When I schedule DAGs to run at a specific time every day, the DAG execution does not take place at all. However, when I restart Airflow webserver and …

The start_date configured for the dags. Observation: Airflow dags are running almost at the same time, although they are clearly scheduled 1 hour apart from each other. Those dags with the prefix 2000, 30 were deployed and should run 1 hour apart from each other. Moreover, they do not start anywhere near the time indicated by start_date, even if you consider different time zones.

To solve this kind of mystery you need to know 2 important things about the Airflow scheduler, especially about the start_date parameter. First: “Note that Airflow simply looks at the latest execution_date and adds the schedule_interval to determine the next execution_date” (taken from the official docs). If there is no last execution_date (because it is the first run), it will run at the specified start_date instead. Second: if you set a start_date in the past with a specific time interval, Airflow will try to fill up and execute all missing runs immediately after you activate the dag.
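
The two scheduler facts quoted above can be written down as a minimal sketch in plain Python. The function names `next_execution_date` and `missed_execution_dates` and the example dates are assumptions made for illustration; they are not part of Airflow's API. The sketch also assumes that a run is only due once its interval has fully elapsed.

```python
from datetime import datetime, timedelta
from typing import List, Optional


def next_execution_date(latest: Optional[datetime],
                        schedule_interval: timedelta,
                        start_date: datetime) -> datetime:
    """Latest execution_date plus schedule_interval, or start_date
    if there has been no run yet (the first run)."""
    if latest is None:
        return start_date
    return latest + schedule_interval


def missed_execution_dates(start_date: datetime,
                           schedule_interval: timedelta,
                           now: datetime) -> List[datetime]:
    """Execution dates a freshly activated dag would have to fill up.

    Assumption: a run counts as missed only when its whole interval
    lies in the past, i.e. execution_date + schedule_interval <= now.
    """
    missed = []
    current = next_execution_date(None, schedule_interval, start_date)
    while current + schedule_interval <= now:
        missed.append(current)
        current = next_execution_date(current, schedule_interval, start_date)
    return missed


# Hypothetical example: daily dag, start_date three days before activation.
start = datetime(2020, 1, 1, 11, 30)
now = datetime(2020, 1, 4, 12, 2)
backlog = missed_execution_dates(start, timedelta(days=1), now)
print(backlog)
```

With a start_date far in the past, the backlog grows by one entry per elapsed interval, and all of those runs become due at the moment the dag is activated.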
Apache Airflow (that is, Airbnb workflow) was developed by Airbnb to author, schedule, and monitor the company’s complex workflows.

We observed a problem where dags did not run at the specified time at all but consistently started at a random time. We have a job chain of three dags which are scheduled for daily execution and should run in succession. For that we defined the start_date at 11:30 (12:30 / 13:30 respectively).

Note that Airflow simply looks at the latest execution_date and adds the schedule_interval to determine the next execution_date. The best practice is to have the start_date rounded to your DAG’s schedule_interval. Daily jobs have their start_date some day at 00:00:00, hourly jobs have their start_date at 00:00 of a specific hour.
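
The rounding rule can be sketched with `datetime.replace` from the standard library. The helper `round_start_date` is a hypothetical name, and it only covers the two cases the text mentions (daily and hourly intervals); other intervals are returned unchanged.

```python
from datetime import datetime, timedelta


def round_start_date(start_date: datetime,
                     schedule_interval: timedelta) -> datetime:
    """Round start_date down to the boundary of the schedule interval."""
    if schedule_interval >= timedelta(days=1):
        # Daily jobs: some day at 00:00:00.
        return start_date.replace(hour=0, minute=0, second=0, microsecond=0)
    if schedule_interval >= timedelta(hours=1):
        # Hourly jobs: minute 00 and second 00 of a specific hour.
        return start_date.replace(minute=0, second=0, microsecond=0)
    # Shorter intervals are not covered by the rule in the text.
    return start_date


daily_start = round_start_date(datetime(2020, 1, 1, 11, 30), timedelta(days=1))
hourly_start = round_start_date(datetime(2020, 1, 1, 11, 30), timedelta(hours=1))
print(daily_start, hourly_start)
```

With a rounded start_date, the desired time of day would have to come from the schedule itself rather than from start_date, for example a cron-style schedule_interval such as `"30 11 * * *"` for the first dag. That value is an assumption based on the 11:30 mentioned in the text.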
